Scientists at the University of California, Los Angeles (UCLA) have developed an AI-powered “co-pilot” to dramatically improve assistive devices for people with paralysis. The research, conducted in the Neural Engineering and Computation Lab led by Professor Jonathan Kao with student developer Sangjoon Lee, tackles a major issue with non-invasive, wearable brain-computer interfaces (BCIs): “noisy” signals. This means the specific brain command (the “signal”) is very faint and gets drowned out by all the other electrical brain activity (the “noise”), much like trying to hear a whisper in a loud, crowded room. This low signal-to-noise ratio has made it difficult for users to control devices with precision.

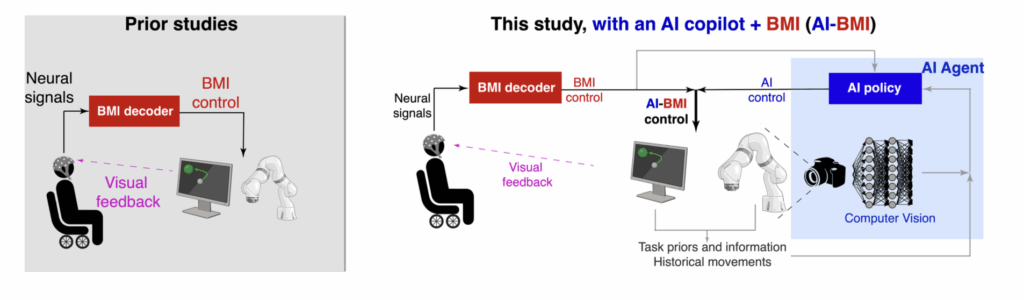

The team’s breakthrough solution is a concept called shared autonomy. Instead of only trying to decipher the user’s “noisy” brain signals, the AI co-pilot also acts as an intelligent partner by analyzing the environment, using data like a video feed of the robotic arm. By combining the user’s likely intent with this real-world context, the system can make a highly accurate prediction of the desired action. This allows the AI to help complete the action, effectively filtering through the background noise that limited older systems.
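To make the idea of shared autonomy concrete, here is a minimal sketch of one common way to blend a noisy user command with a context-based suggestion. It is an illustration only, not the UCLA team’s method: the function name `blend_command`, the `assist` weight, and the assumption that candidate targets are already known (for example, from a camera) are all hypothetical.

```python
import math


def blend_command(decoded, cursor, targets, assist=0.5):
    """Blend a noisy decoded 2D velocity with a pull toward the likeliest target.

    decoded: (vx, vy) velocity decoded from the user's signals (assumed input).
    cursor:  (x, y) current position of the cursor or arm tip.
    targets: list of (x, y) candidate goals, assumed to come from scene context.
    assist:  0.0 means user only, 1.0 means copilot only (hypothetical knob).
    """
    speed = math.hypot(decoded[0], decoded[1])
    if speed == 0 or not targets:
        return decoded

    def alignment(target):
        # Cosine of the angle between the decoded direction and the target.
        dx, dy = target[0] - cursor[0], target[1] - cursor[1]
        dist = math.hypot(dx, dy)
        if dist == 0:
            return -1.0
        return (decoded[0] * dx + decoded[1] * dy) / (speed * dist)

    # Guess the intended goal: the target the user seems to be heading for.
    goal = max(targets, key=alignment)
    dx, dy = goal[0] - cursor[0], goal[1] - cursor[1]
    dist = math.hypot(dx, dy)
    if dist == 0:
        return decoded

    # Copilot suggestion: same speed as the user's command, aimed at the goal.
    copilot = (speed * dx / dist, speed * dy / dist)
    return (
        (1 - assist) * decoded[0] + assist * copilot[0],
        (1 - assist) * decoded[1] + assist * copilot[1],
    )


# The user's command drifts upward; the copilot nudges it toward (10, 0).
print(blend_command((1.0, 0.2), (0.0, 0.0), [(10.0, 0.0), (0.0, 10.0)]))
```

With `assist=0.0` the user’s command passes through unchanged, and with `assist=1.0` the copilot steers fully toward the inferred goal. A real system would have to choose this balance carefully so the user stays in control.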

The results of this new approach are remarkable. In lab tests, participants using the AI co-pilot to control a computer cursor and a robotic arm saw their performance improve nearly fourfold. This significant leap forward has the potential to restore a new level of independence for individuals with paralysis. By making wearable BCI technology far more reliable and intuitive, it could empower users to perform complex daily tasks on their own, reducing their reliance on caregivers.

Source: University of Illinois Urbana-Champaign